I’ve been in and around media ad sales long enough to have opinions that aren’t popular.

I believe most “data strategy” projects don’t move revenue. I believe most AI pilots die in year two. I believe the gap between what ad sales technology promises and what it delivers is large enough to have made a lot of consultants very comfortable.

But I also believe – with specificity, because I’ve seen the outcomes – that there are a small number of intelligence investments that compound year after year and fundamentally change what a publisher can charge, how fast their sellers close, and how reliably their platform performs at scale.

I’ve spent 14 years learning the difference: working inside NBCUniversal’s $8B AdSales ecosystem, through the transition from linear to streaming, through multiple upfront cycles, through the Paris Olympics and the Super Bowl and every tentpole in between.

What follows are the five things I know – with confidence, from experience – actually move revenue at publisher scale. Not in theory. In production.

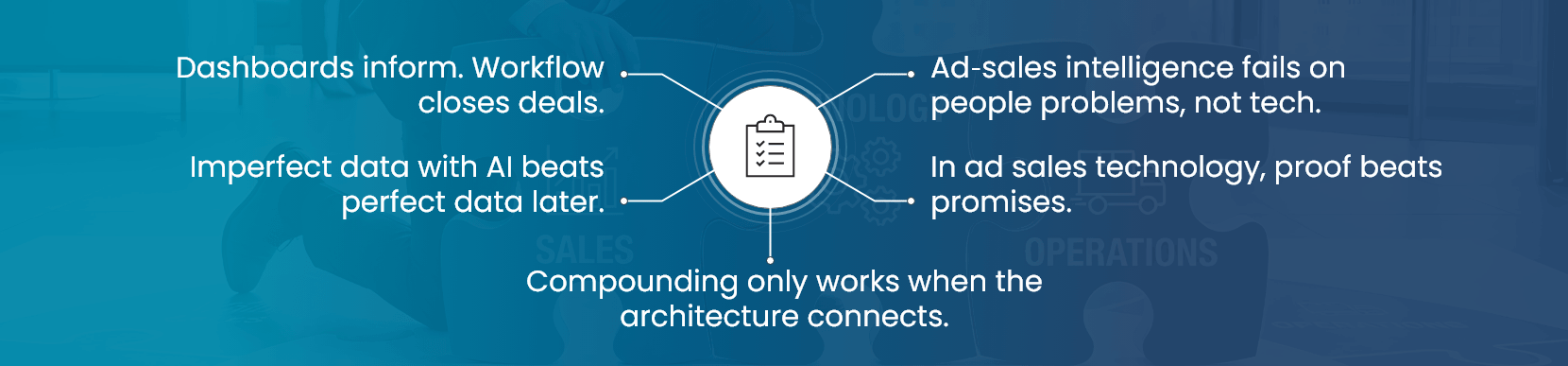

Lesson One: Data Without Workflow Integration Is a Dashboard. And Dashboards Don’t Close Deals.

The first version of almost every publisher data project produces a dashboard. It’s usually a good dashboard. It consolidates data that was previously scattered. It produces insights nobody had seen in one place before. And it gets minimal use after the first month.

The reason is simple: a dashboard requires a user with time, intention, and the discipline to translate what they see into an action. In ad sales, the people with the most valuable decisions to make are also the people with the least time. They’re not opening dashboards. They’re opening email chains and hopping on calls.

The intelligence systems that actually compound – the ones that still show ROI in year five – are the ones that put the right information in the seller’s workflow without asking them to do anything differently. Not a new login. Not a new report. A brief that’s already in their inbox when they need it.

Design for the workflow, not the data model. The data model is the easier problem.

Lesson Two: AI on Imperfect Data Beats Waiting for Perfect Data. Every Time.

I’ve watched publishers spend two years “building the data foundation” before they were willing to deploy any intelligence system. By the time the foundation was built, the market had moved, the team had turned over, and the original use case had changed.

Here’s what 14 years of production deployment has taught me: the foundation is never done. Data quality issues persist indefinitely. Schemas evolve. New sources get added. Something is always broken somewhere.

The publishers who’ve gotten the most value from intelligence investments are the ones who deployed against imperfect data, learned from the outcomes, improved the data architecture iteratively, and compounded their investment over time.

Sales Agent AI deployed against a partially clean CRM is far more valuable than a perfect CRM that doesn’t have an intelligence layer yet. Yield optimization deployed against incomplete bid log data catches more revenue leakage than a yield tool that’s waiting for the data warehouse migration to complete.

Ship imperfect intelligence. Improve it in production. The alternative – waiting – has a cost that almost nobody models explicitly but that consistently exceeds the cost of imperfect deployment.
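One way to make that cost explicit is a back-of-the-envelope comparison. The sketch below is illustrative only: the function name, the quality factor, the monthly improvement rate, and every number are hypothetical assumptions, not figures from any real deployment. It compares shipping now at partial quality and improving in production against waiting two years for a "finished" foundation.

```python
def cumulative_value(full_quality_monthly_value, start_quality,
                     monthly_quality_gain, start_month, horizon_months):
    """Sum the value captured over the horizon when deployment begins at start_month.

    Quality is a 0..1 multiplier on the value the system would deliver with
    perfect data. It improves by a fixed step each month after launch.
    All inputs are hypothetical planning assumptions.
    """
    total = 0.0
    quality = start_quality
    for month in range(horizon_months):
        if month >= start_month:
            total += full_quality_monthly_value * quality
            quality = min(1.0, quality + monthly_quality_gain)
    return total


# Hypothetical scenario: 100 value units per month at full data quality,
# evaluated over a 36-month horizon.
ship_now = cumulative_value(100.0, start_quality=0.5, monthly_quality_gain=0.02,
                            start_month=0, horizon_months=36)
wait_two_years = cumulative_value(100.0, start_quality=1.0, monthly_quality_gain=0.0,
                                  start_month=24, horizon_months=36)

cost_of_waiting = ship_now - wait_two_years
print(f"ship now: {ship_now:.0f}, wait: {wait_two_years:.0f}, "
      f"cost of waiting: {cost_of_waiting:.0f}")
```

Under these made-up inputs, the early deployment comes out well ahead even though it starts at half quality. The point is not the specific numbers but that the comparison can be written down and challenged with your own.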

Lesson Three: The Compound Effect Is Real, But Only If the Architecture Supports It.

Intelligence compounds when it’s connected. Audience intelligence that feeds yield intelligence that feeds seller intelligence that feeds measurement that feeds back into audience modeling – this is a system that gets smarter every cycle. Every campaign run, every deal closed, every impression delivered is training data for the next decision.

Point solutions don’t compound. They optimize in isolation. Your yield tool gets better at yield. Your measurement platform gets better at measurement. But they don’t know about each other, so the improvements don’t interact.

The publishers who’ve built connected intelligence architectures over five or more years are operating in a different capability tier than the ones who’ve accumulated best-of-breed point solutions. Not marginally different. Structurally different.

Architecture first. Everything else follows.

Lesson Four: The Hardest Intelligence Problems in Ad Sales Are the Organizational Ones, Not the Technical Ones.

I’ve seen perfectly good intelligence systems fail because the analytics team built them and the sales team didn’t trust them. I’ve seen yield optimization tools produce demonstrably correct recommendations that nobody acted on because the pricing team felt like the algorithm was stepping on their judgment. I’ve seen seller intelligence systems deployed without any training and then written off as “not useful” when the usage metrics looked bad six months later.

The technology is the easier part. The organizational alignment is where intelligence investments succeed or fail.

What works: involving the people who will use the intelligence in the design of the intelligence. Not as a checkbox consultation. As genuine co-design. ADs who participate in designing their own pre-meeting brief format use the output differently than ADs who receive a brief designed for them by someone who’s never been in a client meeting.

What also works: measuring the outcomes that matter to the people using the system, not the outcomes that matter to the technology team. Prep time saved matters to ADs. Data pipeline efficiency does not. Design the success metrics around the user’s reality, not the system’s capabilities.

Lesson Five: Proof Is the Only Pitch That Works in Ad Sales Technology.

I’ve sat in enough vendor presentations to know how they go. Compelling platform demo. Impressive client list. A slide with a statistic that reads “$XXX million in value delivered” with a footnote that traces back to a methodology that’s hard to verify.

And I’ve watched sophisticated ad sales leadership teams make decisions based on those presentations that they regret within 18 months.

Here’s what actually changes a technology buying decision in ad sales: a proof point that is specific, that relates to an operation similar to yours, and that involves an outcome you can verify.

$250 million in influenced ad revenue at an $8B operation. $1.5 million in annual cloud savings, broken down by specific cost category. 30–40% prep time reduction, measured against a baseline before deployment. 60-day deployment timeline, demonstrated against a real implementation.

These are the things that move decisions. Not because they’re impressive numbers – they are – but because they’re specific enough to be challenged. And when challenged, they hold up.

What the Next Five Years Look Like

The intelligence gap between publishers building connected systems and publishers managing disconnected point solutions is widening. It’s widening now.

The ones who compound intelligence compound advantage. The sellers who walk into meetings with a brief based on real-time signal win at higher rates. The yield systems that incorporate audience sentiment data price inventory more accurately. The measurement platforms offering clean room validation retain the best advertiser relationships.

None of this is magic. It’s architecture, deployed consistently over time, by people who know the difference between a dashboard and a system.

After 14 years, that distinction is the clearest thing I know about this industry. And it’s the thing I’ll keep building toward.
