Most analytics projects fail because operationalization is addressed only as an afterthought. The top barrier to scaling analytics implementations is the complexity of integrating the solution with existing enterprise applications and aligning practices across the disparate teams that support them.

In addition, new Ops terms seem to spring up every day, leaving D&A business and IT leaders more confused than ever. This article defines some of the Ops terms relevant to Data and Analytics (D&A) applications and discusses the common enablers and guiding principles for successfully implementing the ones relevant to you.

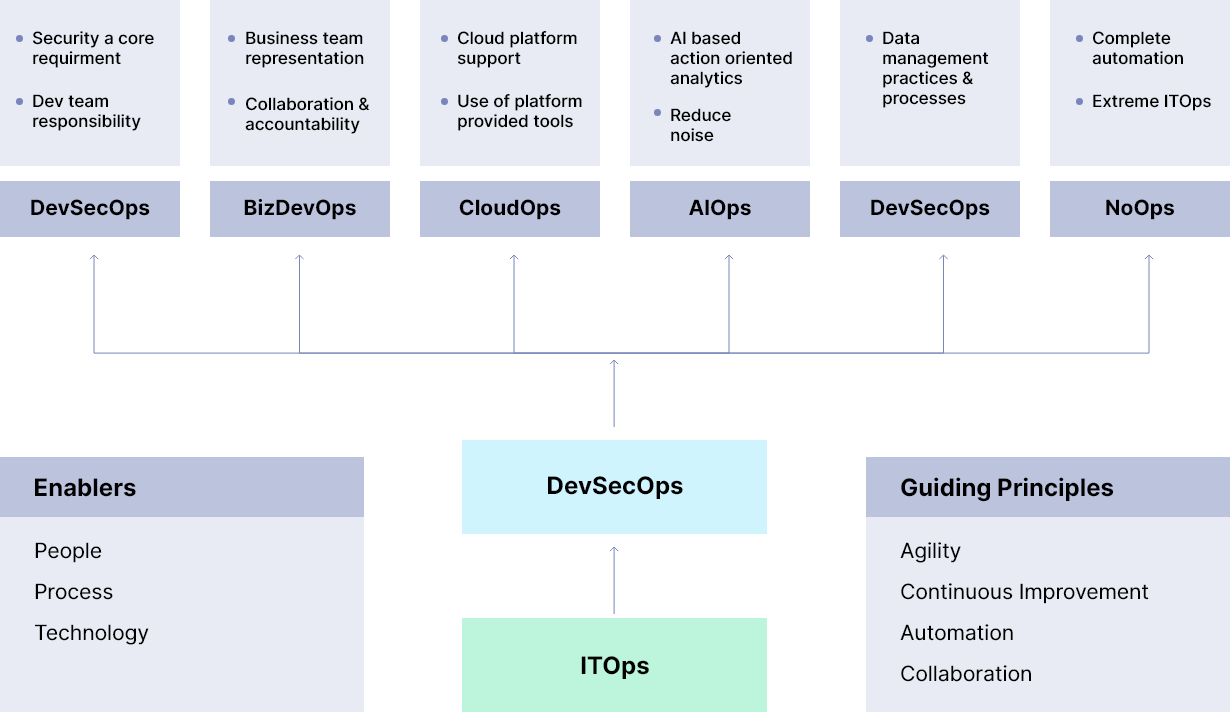

Let’s look at the multiple Ops models below:

Fig 1: D&A Ops Terms

ITOps – The most traditional way of running IT operations in any company is “ITOps”. Here, an IT department caters to infrastructure and networking needs and runs a Service Desk to serve its internal customers. The department covers most operations, such as provisioning, maintenance, governance, deployments, audit and security, in these three areas. It is not responsible for any application-related support. The application development team relies heavily on this team for any infrastructure-related requirement.

DevOps – Given some of the obvious challenges with ITOps, the preferred way of working is “DevOps”. Project teams adopt processes that reduce their dependency on the IT team for infrastructure requirements, and they do the bulk of the ops work themselves using a range of tools and technologies. This mainly involves automating the CI/CD pipeline, including automated test validation.

BizDevOps – This is a variant of the DevOps model that adds business representation to the DevOps team for closer collaboration and accountability, driving better products, higher efficiency and early feedback.

DevSecOps – This adds the security dimension to your DevOps process to ensure the system security and compliance your business requires. It ensures that security is not an afterthought and is a responsibility shared by the development team as well. It covers infrastructure security, network security and application-level security considerations.

DataOps – It focuses on cultivating data management practices and processes that improve the speed and accuracy of analytics, including data access, quality control, automation, integration, and ultimately, model deployment and management.
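As an illustration of the quality-control part of DataOps, here is a minimal Python sketch of an automated quality gate that a data pipeline could run before publishing a batch. The field names (`customer_id`, `order_total`), the rules and the function names are hypothetical and chosen only for this example; a real pipeline would take its rules from the agreed data contract.

```python
from typing import Any, Dict, List

# Hypothetical schema for illustration only.
REQUIRED_FIELDS = ("customer_id", "order_total")


def find_quality_issues(rows: List[Dict[str, Any]]) -> List[str]:
    """Return a human-readable issue for every rule a row violates."""
    issues = []
    for index, row in enumerate(rows):
        # Rule 1: required fields must be present and not null.
        for field in REQUIRED_FIELDS:
            if row.get(field) is None:
                issues.append(f"row {index}: missing {field}")
        # Rule 2: order totals must not be negative.
        total = row.get("order_total")
        if isinstance(total, (int, float)) and total < 0:
            issues.append(f"row {index}: negative order_total")
    return issues


def quality_gate(rows: List[Dict[str, Any]], max_issues: int = 0) -> bool:
    """Pass the batch only if it has no more than max_issues problems."""
    return len(find_quality_issues(rows)) <= max_issues


batch = [
    {"customer_id": "C1", "order_total": 120.5},
    {"customer_id": None, "order_total": 30.0},
    {"customer_id": "C3", "order_total": -5.0},
]
issues = find_quality_issues(batch)
```

Running the gate automatically on every batch, rather than inspecting data by hand, is what ties this check back to the speed and accuracy goals described above.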

CloudOps – With increasing cloud adoption, CloudOps is considered a necessity in an organization. CloudOps mainly covers infrastructure management, platform monitoring and taking predefined corrective actions in an automated way. Key benefits of CloudOps are high availability, agility and scalability.
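The idea of "predefined corrective actions" can be sketched in a few lines of Python. This is only an illustrative sketch: the metric names, thresholds and action labels are assumptions made for the example, and a real setup would call the cloud provider's own monitoring and scaling services instead of returning strings.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Rule:
    """A predefined rule: when the metric exceeds the threshold, run the action."""
    metric: str
    threshold: float
    action: Callable[[], str]


def evaluate(metrics: Dict[str, float], rules: List[Rule]) -> List[str]:
    """Run the action of every rule whose metric is above its threshold."""
    results = []
    for rule in rules:
        value = metrics.get(rule.metric)
        if value is not None and value > rule.threshold:
            results.append(rule.action())
    return results


# Hypothetical rules; the actions only return a label in this sketch.
rules = [
    Rule("cpu_percent", 85.0, lambda: "scale_out"),
    Rule("disk_percent", 90.0, lambda: "purge_temp_files"),
]

actions = evaluate({"cpu_percent": 92.5, "disk_percent": 40.0}, rules)
```

Keeping the rules as data, separate from the evaluation loop, means the operations team can add or tune corrective actions without changing the monitoring code.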

AIOps – This is the next level of Ops, where AI is used to monitor and analyse data across multiple environments and platforms. It combines data points from multiple systems, identifies the correlations between them and generates analytics for further action, rather than just providing raw data to the Ops team.
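To make the correlation step concrete, the following Python sketch computes the Pearson correlation between metric series collected from different systems and reports the strongly related pairs. It is a deliberately simple stand-in for what AIOps tooling does: the signal names and sample values are invented for the example, and real platforms use far richer models than a pairwise correlation.

```python
from math import sqrt
from typing import Dict, List, Sequence, Tuple


def pearson(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Pearson correlation coefficient of two equal-length series."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("series must have the same length (at least 2)")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0  # a constant series carries no correlation signal
    return cov / sqrt(var_x * var_y)


def correlated_pairs(
    series: Dict[str, Sequence[float]], min_abs_r: float = 0.8
) -> List[Tuple[str, str, float]]:
    """Return every pair of signals whose |r| is at least min_abs_r."""
    names = sorted(series)
    pairs = []
    for i, first in enumerate(names):
        for second in names[i + 1:]:
            r = pearson(series[first], series[second])
            if abs(r) >= min_abs_r:
                pairs.append((first, second, round(r, 3)))
    return pairs


# Invented sample data from three hypothetical systems.
signals = {
    "db_latency_ms": [10, 12, 15, 30, 45],
    "api_error_rate": [0.1, 0.1, 0.2, 0.6, 0.9],
    "batch_queue_depth": [5, 4, 6, 5, 4],
}
pairs = correlated_pairs(signals)
```

With this sample data, only the database latency and API error rate move together strongly enough to be reported, which is the kind of summarized finding an Ops team can act on instead of reading three raw metric feeds.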

NoOps – This is the extreme case of ITOps, where there is no dependency on IT personnel and the entire system is automated. A good example of this is serverless computing on a cloud platform.

Let us now look at the common guiding principles and enablers that are relevant to all these models, as well as to any new Ops model that may be defined in the future.

Guiding principles:

- Agility – The adopted model should increase the agility of the system so that it can respond to change with speed and high quality.

- Continuous improvement – The model should take feedback into account early and learn from failures to improve the end product.

- Automation – The biggest contributor is automating every possible manual task to reduce time, improve quality and increase repeatability.

- Collaboration – The model succeeds only when the various parts of the organization work as a single team towards one goal and are able to share all knowledge, learnings and feedback.

Enablers – There are multiple dimensions along which any model can be enabled using the principles mentioned above.

- People – You need a team that has the right skills and culture and is ready to take on the responsibility and accountability to make this work.

- Process – Existing processes need to be optimized, or new processes introduced, to improve the overall efficiency of the team and the quality of the end product.

- Technology – With the focus on automation, technology plays a key role by giving the team a continuous development and release pipeline. This covers various aspects of the pipeline, such as core development, testing, build, release and deployment.

Which of the Ops models above works best for you will depend on your business requirements, application platform and available skills. It is clear that an Ops model is not optional going forward, and that one or more Ops models are required to improve agility, automation, operational excellence and productivity. Achieving the desired success with any chosen Ops model requires proper planning, vision, understanding, investment and stakeholder buy-in.
